A fresh Linux VPS gets scanned for open SSH within minutes of being assigned a public IP. I’ve watched the auth log on a brand-new Hetzner box log over 400 root password attempts in the first hour. Linux server security at the baseline level is not optional, and it’s not difficult. It just has to actually get done before anything else lands on the box.

This post is the exact hardening pass I run on every server I deploy: an SSH key on the local machine, a non-root sudo user, root SSH login disabled, password authentication turned off, and UFW set to deny everything except the ports that need to listen. It takes about fifteen minutes to go from a fresh image to a server that no longer shows up as low-hanging fruit to the Internet’s scanners.

Do this once, and every other security layer you stack on top (CrowdSec, fail2ban, a WAF) becomes a meaningful addition rather than a band-aid over an open door.

Why SSH keys matter more than any other hardening step

Password authentication on SSH is the single biggest reason servers get owned. Every public IP gets hammered by botnets running dictionary attacks against root, admin, ubuntu, and a few hundred other common usernames. Pick a password short enough to be guessed before the heat death of the universe and you’ll get owned eventually.

SSH keys solve this categorically. A 4096-bit RSA key is not going to be brute-forced. Once password auth is off and only key-based logins are accepted, the brute-force noise in your auth log goes from thousands per hour to functionally zero. The auth log gets quiet enough that you’ll actually notice the rare anomaly when one shows up.
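To see that noise for yourself, a small shell sketch can tally failed password attempts per username. It assumes the usual OpenSSH log wording (Failed password for ... from ...) and a Debian/Ubuntu-style /var/log/auth.log. The name count_failed_logins is a made-up helper, not a standard tool.

```shell
# Hypothetical helper: reads sshd log lines on stdin and prints
# "<count> <username>" for failed password attempts, busiest first.
count_failed_logins() {
  grep 'Failed password for' \
    | sed -E 's/.*Failed password for (invalid user )?([^ ]+) from .*/\2/' \
    | sort | uniq -c | sort -rn
}

# Example use on the server (assumes the log lives at /var/log/auth.log):
#   sudo cat /var/log/auth.log | count_failed_logins | head
```

On systems that only log to the journal, the same function should work on the output of journalctl for the SSH unit, though the unit name varies by distribution.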

The mechanics are simple. You generate a keypair on your local machine, copy the public half onto the server’s ~/.ssh/authorized_keys, and tell sshd to refuse password logins. The private half stays on your laptop, encrypted with a passphrase, and never leaves it.

Generating an SSH key on your laptop

You only need to do this once per machine, ever. The same keypair works for every server you operate.

On macOS, Linux, or Windows PowerShell, open a terminal and run:

ssh-keygen -t rsa -b 4096

Press Enter to accept the default file location (~/.ssh/id_rsa and ~/.ssh/id_rsa.pub). The explicit -t rsa matters: recent OpenSSH releases generate Ed25519 keys by default, which would land in ~/.ssh/id_ed25519 instead. When it asks for a passphrase, set one. A passphrase encrypts the private key on disk, so even if your laptop is stolen the attacker can’t immediately use the key against your servers.

Warning: Running ssh-keygen without a custom filename targets ~/.ssh/id_rsa. If a keypair already exists there, ssh-keygen asks before overwriting it, and answering y destroys the old key. If you’ve used SSH before, check first with ls ~/.ssh/ and use -f ~/.ssh/id_rsa_newserver to write to a new path instead.
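One way to avoid clobbering an existing key is to pick the output path programmatically. The sketch below is only an illustration: next_free_key_path is a hypothetical helper, and the _1, _2 suffix scheme is an arbitrary choice.

```shell
# Hypothetical helper: print the first path (base, base_1, base_2, ...)
# where neither the private key nor its .pub file already exists.
next_free_key_path() {
  local base="$1" candidate="$1" n=1
  while [ -e "$candidate" ] || [ -e "$candidate.pub" ]; do
    candidate="${base}_${n}"
    n=$((n + 1))
  done
  printf '%s\n' "$candidate"
}

# Example use (commented out so defining the function has no side effects):
#   key_path="$(next_free_key_path "$HOME/.ssh/id_rsa")"
#   ssh-keygen -t rsa -b 4096 -f "$key_path"
```

If the key ends up at a non-default path, pass it explicitly when connecting with ssh -i.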

The public key, the half that ends in .pub, is what you’ll paste onto the server. Print it with:

cat ~/.ssh/id_rsa.pub

On Windows the path is C:\Users\<your-username>\.ssh\id_rsa.pub. The contents are a single long line starting with ssh-rsa AAAAB3.... Copy the whole line, including the comment at the end (often user@hostname, which looks like an email address) if there is one.

Most decent SSH clients (Termius, Tabby, PuTTY’s PuTTYgen) can also generate keys with a GUI workflow. The public key comes out in the same format. Just don’t use the cloud-sync feature to upload your private key; keys live on the device they were generated on.

Adding your key to the server

Two paths here, depending on what your VPS provider supports.

The clean path: most cloud dashboards (Hetzner, DigitalOcean, OVH, Vultr) let you paste the public key into the provider’s UI before you create the server. The image gets your key baked into /root/.ssh/authorized_keys automatically, and you can SSH in as root on first boot. This is the path I take whenever the provider supports it.

The manual path: if your provider doesn’t have that option, you’ll do it after first login. Log in as root with the temporary password the provider emailed you, then:

mkdir -p ~/.ssh

nano ~/.ssh/authorized_keys

Paste the public key on a single line, save with Ctrl+O, and exit with Ctrl+X. Then fix the permissions; sshd is fussy about them:

chmod 700 ~/.ssh

chmod 600 ~/.ssh/authorized_keys

In a second terminal, test the SSH login: ssh root@your.server.ip. You should get straight in without being asked for the account password (a prompt for your key’s passphrase is expected). If you get a password prompt instead, something is wrong with permissions or with the key contents. Don’t proceed to the next step until key-based login works.
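The manual steps above can also be scripted. This is a minimal sketch: install_pubkey is a hypothetical helper name, and it assumes the existing authorized_keys file, if any, ends with a newline. Where password login is still enabled, the standard ssh-copy-id tool does a similar job from the laptop side.

```shell
# Hypothetical helper: add a public key to an authorized_keys file once,
# creating the directory and file with the permissions sshd expects.
install_pubkey() {
  local pubkey="$1" ssh_dir="${2:-$HOME/.ssh}"
  local auth_file="$ssh_dir/authorized_keys"
  mkdir -p "$ssh_dir"
  chmod 700 "$ssh_dir"
  touch "$auth_file"
  chmod 600 "$auth_file"
  # -x: match the whole line, -F: treat the key as a fixed string, -q: quiet
  if ! grep -qxF -- "$pubkey" "$auth_file"; then
    printf '%s\n' "$pubkey" >> "$auth_file"
  fi
}

# Example use on the server, with the key pasted in quotes:
#   install_pubkey 'ssh-rsa AAAAB3... you@laptop'
```

Running it twice with the same key leaves a single entry, so it is safe to re-run.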

Creating a non-root sudo user

Root is convenient and dangerous. A typo in an rm -rf runs against the entire filesystem with no warning. The convention on Linux is to do day-to-day work as a normal user with sudo privileges, and reserve direct root logins for the rare case where you really need them (most of which can also be handled with sudo -i).

Create the user. Replace simon with whatever username you want:

adduser simon

You’ll be asked for a password. This password is what sudo will prompt for, not what SSH will use, so make it a strong one and store it in your password manager. Skip the optional fields by pressing Enter.

Now grant sudo:

usermod -aG sudo simon

Copy your SSH key over to the new user’s home directory. The cleanest way is to do it from the new user’s session itself. First, switch to the new user:

su - simon

mkdir -p ~/.ssh

nano ~/.ssh/authorized_keys

Paste the same public key. Save and exit. Set the permissions:

chmod 700 ~/.ssh

chmod 600 ~/.ssh/authorized_keys

In a second terminal, verify the new user can SSH in directly: ssh simon@your.server.ip. Once that works, run sudo whoami from inside that session. It should return root. If both work, you’re ready to disable root SSH and password auth.

Warning: Do not log out of your existing root session until you’ve verified that the new sudo user can SSH in and run sudo. Locking yourself out of a fresh VPS is a 30-second mistake that takes 30 minutes of console-recovery to fix.

Locking down sshd_config

This is the file that controls how the SSH daemon behaves. Open it:

sudo nano /etc/ssh/sshd_config

There are three lines to change. Find each one (Ctrl+W in nano searches), uncomment it if needed, and set the value:

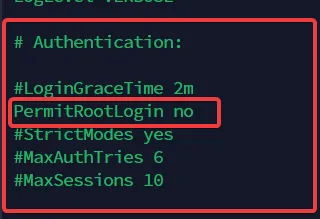

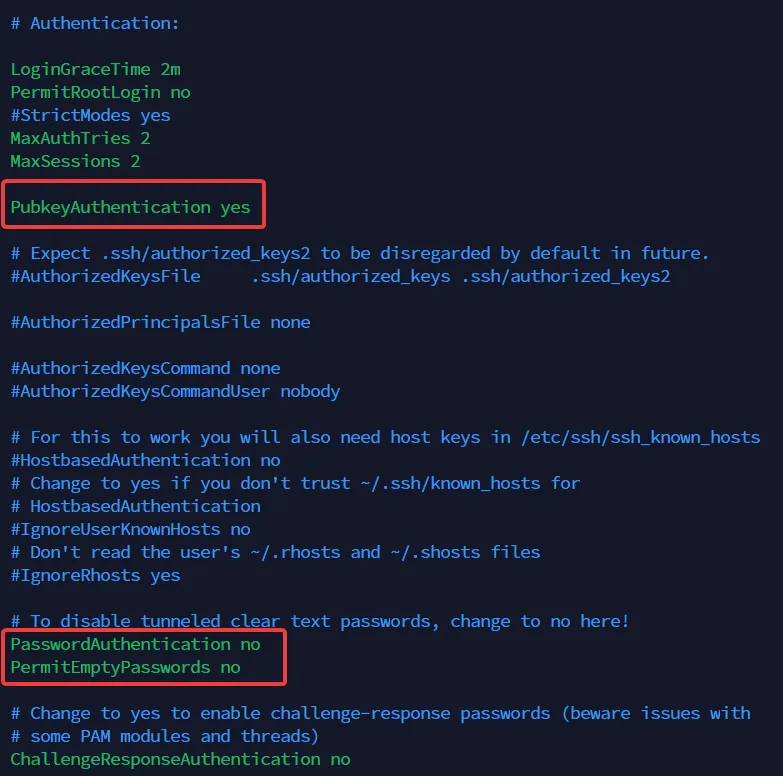

- PermitRootLogin no: root cannot SSH in directly anymore.

- PasswordAuthentication no: only key-based auth is accepted.

- PubkeyAuthentication yes: public-key auth is enabled (usually already on, but verify).

The PermitRootLogin directive flipped from the default to no. After this, root cannot open an SSH session directly.

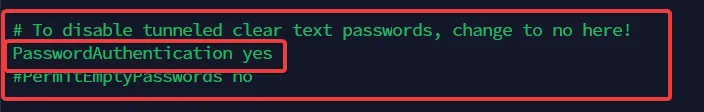

If you’re transitioning a server that legacy users still log in to with passwords, leave PasswordAuthentication yes for the moment, finish migrating those users to keys, then come back and flip it.

The transitional state with password auth still enabled. Acceptable for an hour while you migrate users; not acceptable as the long-term setting.

Once every account that needs access has its key in place, lock the config to public-key only:

The end state I run on every production box: PermitRootLogin no, PasswordAuthentication no, PubkeyAuthentication yes. Brute-force traffic against this config is wasted bytes.

Save and exit, then reload sshd to apply:

sudo systemctl reload sshd

I prefer reload over restart here; it leaves existing sessions intact in case I made a typo. (On Debian and Ubuntu the unit itself is named ssh; if sshd isn’t recognised, use sudo systemctl reload ssh.) In a second terminal, test the new state. Try logging in as root (ssh root@your.server.ip); it should be refused. Try logging in as your sudo user with the key; it should succeed. Try logging in with the password explicitly (ssh -o PreferredAuthentications=password simon@your.server.ip); it should also be refused.

If all three behave as expected, you’re done with sshd.
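If one of the three tests misbehaves, a drop-in file under /etc/ssh/sshd_config.d/ may be overriding the main config, since sshd keeps the first value it reads for each keyword. The sketch below is an alternative to editing in place, not the method used above. It assumes the main sshd_config has an Include line for that directory (recent Debian and Ubuntu releases do). Both function names and the 00-hardening.conf filename are hypothetical. The second helper checks the effective settings as printed by sshd -T.

```shell
# Hypothetical helper: write the three directives to a drop-in file.
write_sshd_dropin() {
  local target="$1"
  cat > "$target" <<'EOF'
PermitRootLogin no
PasswordAuthentication no
PubkeyAuthentication yes
EOF
  chmod 644 "$target"
}

# Hypothetical helper: read "keyword value" lines on stdin (the format
# printed by `sshd -T`) and exit non-zero if any of the three differs.
check_sshd_baseline() {
  awk '
    BEGIN {
      want["permitrootlogin"] = "no"
      want["passwordauthentication"] = "no"
      want["pubkeyauthentication"] = "yes"
      bad = 0
    }
    {
      key = tolower($1)
      if (key in want) {
        seen[key] = 1
        if (tolower($2) != want[key]) {
          printf "MISMATCH %s %s\n", key, $2
          bad = 1
        }
      }
    }
    END {
      for (k in want) {
        if (!(k in seen)) {
          printf "MISSING %s\n", k
          bad = 1
        }
      }
      exit bad
    }
  '
}

# Example use from a root shell (sudo -i), with the functions defined there:
#   write_sshd_dropin /etc/ssh/sshd_config.d/00-hardening.conf
#   sshd -t && systemctl reload sshd      # -t validates the config first
#   sshd -T | check_sshd_baseline && echo "sshd baseline OK"
```

The 00- prefix is there so the file sorts ahead of other drop-ins and its values are read first.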

Installing UFW and writing the firewall rules

UFW (Uncomplicated Firewall) is a friendly wrapper around iptables that ships with Ubuntu and is packaged for Debian. It’s not the most powerful firewall on Linux, but it’s the right tool for a single-host web server. Anyone who’s ever stared at a 60-line iptables rule chain at 2am will understand why “uncomplicated” matters.

Install it:

sudo apt install ufw -y

If you don’t use IPv6 on this server, edit the default config and disable it. A half-configured IPv6 stack is worse than a fully disabled one because UFW will silently let v6 traffic past rules you wrote for v4.

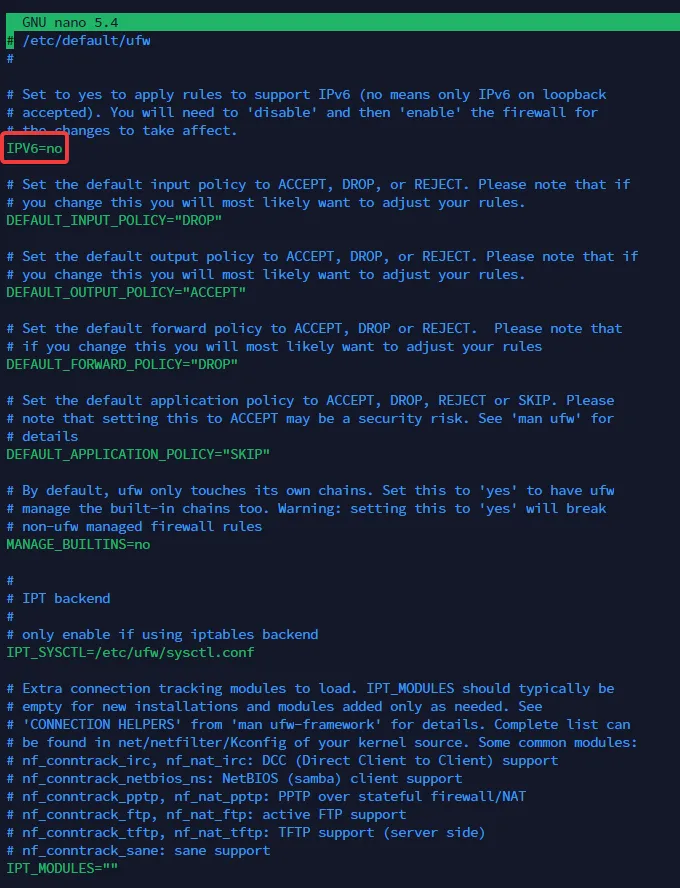

sudo nano /etc/default/ufw

Set IPV6=no, save, exit.

The IPV6 directive set to no in /etc/default/ufw. Skip this step if your server actually serves traffic on IPv6.
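For scripted builds, the same edit can be made without opening an editor. This is a sketch only: set_ufw_ipv6 is a hypothetical helper, and it assumes the file already contains an IPV6= line, as the packaged /etc/default/ufw does.

```shell
# Hypothetical helper: set the IPV6= line in a ufw defaults file.
set_ufw_ipv6() {
  local value="$1" file="${2:-/etc/default/ufw}"
  local tmp
  tmp="$(mktemp)" || return 1
  # Rewrite via a temp file, then copy the result back so the original
  # file keeps its owner and permissions.
  sed "s/^IPV6=.*/IPV6=${value}/" "$file" > "$tmp" && cat "$tmp" > "$file"
  local status=$?
  rm -f "$tmp"
  return "$status"
}

# Example use from a root shell:
#   set_ufw_ipv6 no
#   grep '^IPV6=' /etc/default/ufw
```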

Reset any existing UFW rules so we start from a known state:

sudo ufw reset

Confirm with y. Now set the defaults: deny all inbound, allow all outbound. This is the right default for any server that isn’t a router.

sudo ufw default deny incoming

sudo ufw default allow outgoing

Allow the three ports a typical web server needs: SSH on 22, HTTP on 80, HTTPS on 443:

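sudo ufw allow 22 && sudo ufw allow http && sudo ufw allow https

If you have a static office IP, lock SSH down further so it only accepts connections from that source. Use this rule instead of the blanket allow 22 above; if both rules exist, port 22 stays open to everyone (remove the blanket rule with sudo ufw delete allow 22 if you already added it):

sudo ufw allow from 203.0.113.42 to any port 22

Replace 203.0.113.42 with your actual public IP. Be very sure of the IP before you commit; this is another way to lock yourself out of a fresh box.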
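The whole rule set can be generated as a reviewable list of commands. In this sketch, ufw_baseline_commands is a hypothetical helper that prints either the open SSH rule or the source-restricted one, never both, so a restricted setup cannot leave port 22 open to everyone by accident. It also pins each rule to TCP, which is a slightly stricter choice than the commands above.

```shell
# Hypothetical helper: print the ufw commands for the baseline rule set.
# With an admin IP as the first argument, SSH is limited to that source.
ufw_baseline_commands() {
  local admin_ip="${1:-}"
  echo "ufw default deny incoming"
  echo "ufw default allow outgoing"
  if [ -n "$admin_ip" ]; then
    echo "ufw allow from $admin_ip to any port 22 proto tcp"
  else
    echo "ufw allow 22/tcp"
  fi
  echo "ufw allow 80/tcp"
  echo "ufw allow 443/tcp"
}

# Example use: review the output first, then apply it as root.
#   ufw_baseline_commands 203.0.113.42
#   ufw_baseline_commands 203.0.113.42 | sudo sh
```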

Enable the firewall:

sudo ufw enable

Confirm with y. UFW will warn that this may disrupt existing SSH sessions. If you allowed port 22 above, your session is fine.

Verify the rules:

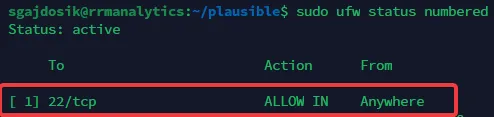

sudo ufw status numbered

The expected ufw status numbered output: 22, 80, and 443 allowed inbound; everything else denied by default.
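The status output shows what UFW intends to do; a probe from outside shows what it actually does. The sketch below assumes bash (for its /dev/tcp feature) and the coreutils timeout command on the machine you probe from. port_open is a hypothetical helper, and 3306 is just an arbitrary port that should be closed.

```shell
# Hypothetical helper: succeeds if a TCP connection to host:port opens
# within 3 seconds. Assumes bash and the coreutils `timeout` command.
port_open() {
  local host="$1" port="$2"
  timeout 3 bash -c "exec 3<>/dev/tcp/${host}/${port}" 2>/dev/null
}

# Example use from your laptop, not from the server itself:
#   for p in 22 80 443 3306; do
#     if port_open your.server.ip "$p"; then
#       echo "$p open"
#     else
#       echo "$p closed or filtered"
#     fi
#   done
```

Ports 80 and 443 will also report closed until a web server is actually listening on them, even though the firewall allows them.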

Make sure UFW starts on boot:

sudo systemctl enable ufw

That’s it. The firewall now blocks every port except the three you explicitly opened.

What to install next, and what to skip

This baseline gets you to a server that scanners can no longer walk straight into. The next step in my standard build is CrowdSec, which adds behavioural detection on top of the firewall. CrowdSec watches your auth logs, web server logs, and (with bouncers) Nginx itself, and bans IPs that exhibit attack patterns. I’ve written a full walkthrough at CrowdSec installation and server protection, and a follow-up specifically for WordPress sites.

Things I deliberately don’t bother with on a single-server agency setup:

- AppArmor / SELinux profile tuning. Worth it on multi-tenant infrastructure, overkill on a single web host. The default Ubuntu AppArmor profiles are already on; leave them.

- Custom kernel hardening (sysctl tweaks for tcp_syncookies, etc.). The defaults in modern Ubuntu kernels are fine. The marginal gain doesn’t justify the debugging surface.

- Disabling root entirely with passwd -l. I leave the root account active so I can use sudo -i to escalate when I need to. Disabling it is theatre on a server where nobody can SSH in as root anyway.

What I do add to the baseline, post-hardening: unattended-upgrades for security patches, a separate VPN (WireGuard, Mistborn, or WireGuard Easy) for admin access, and 2FA on every dashboard the server fronts. Tools like 2FAuth and Authentik are how I close the rest of the loop.

For the broader picture of why the human layer matters as much as the server layer, the human element in cybersecurity defense post is the companion to this one. And if you’re running WordPress on top of this stack, the comprehensive WordPress security guide walks through the application-layer rules you’ll want next.

Closing the loop

The hardening checklist in this post is short, deliberately. SSH keys, sudo user, root login disabled, password auth disabled, UFW with sane defaults. Five steps, fifteen minutes, and a server that’s no longer the easiest target on its subnet.

Everything else (intrusion detection, log analysis, behavioural firewalls, application-layer rules) builds on top of this baseline. Skip the baseline and the rest doesn’t matter much. Get the baseline right and the rest becomes worth doing.

If you’ve been running servers without this layer locked down, the right time to fix it is before the next provisioning, not after the next incident.